Some software systems are critical for business operations, so every release must be defect-free.

Hence, in addition to automated tests, there is always an extensive phase of manual testing before a release goes live. Testing is performed by an internal testing team or by a third-party testing vendor.

You own the testing budget. You need to make sure that every cent has the highest effect on defect-risk mitigation. In addition to a limited budget, you might also have limited time for testing a release candidate before going live.

Today, you follow a black-box testing approach, i.e. you run the test cases associated with the requirements implemented in a release candidate. This is costly because you must run the entire test suite, even if some code changes carry no actual risk.

Sometimes you need to skip test cases because testing time is limited. But without being able to locate the risk within a release candidate, you’ll likely skip the wrong test cases, and defects slip through.

Coding work on functionality A may have side effects on functionality B if they share the same code. Without analytics on code and coding activity, you won’t know about those side effects, and defects slip through.
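To make the shared-code problem concrete, here is a minimal sketch of how such side effects could be surfaced. It assumes you already have a mapping from each functionality to the files that implement it; the function name, the mapping, and the file paths are hypothetical and do not describe Seerene's actual implementation.

```python
def affected_functionalities(footprints, changed_files):
    """Return {functionality: sorted list of its files touched by the change}.

    footprints:    hypothetical mapping of functionality name -> set of file paths
    changed_files: file paths modified in the release candidate
    """
    changed = set(changed_files)
    return {
        name: sorted(files & changed)
        for name, files in footprints.items()
        if files & changed  # keep only functionalities that share changed code
    }


# Illustrative data: A and B both depend on shared/tax.py.
footprints = {
    "A": {"billing/invoice.py", "shared/tax.py"},
    "B": {"checkout/cart.py", "shared/tax.py"},
    "C": {"search/index.py"},
}
changed_files = ["billing/invoice.py", "shared/tax.py"]

impact = affected_functionalities(footprints, changed_files)
```

In this example the work was meant for functionality A, yet B also shows up in `impact` because it shares `shared/tax.py`, so B's test cases should not be skipped.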

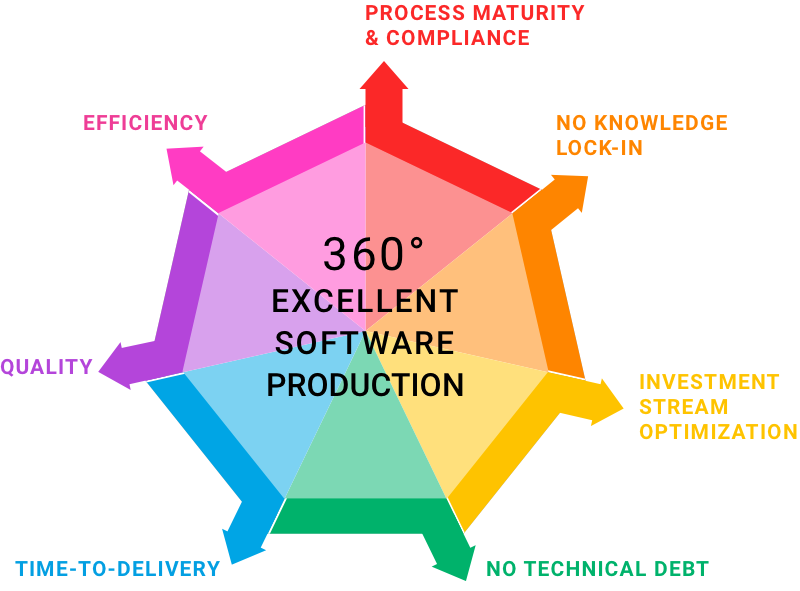

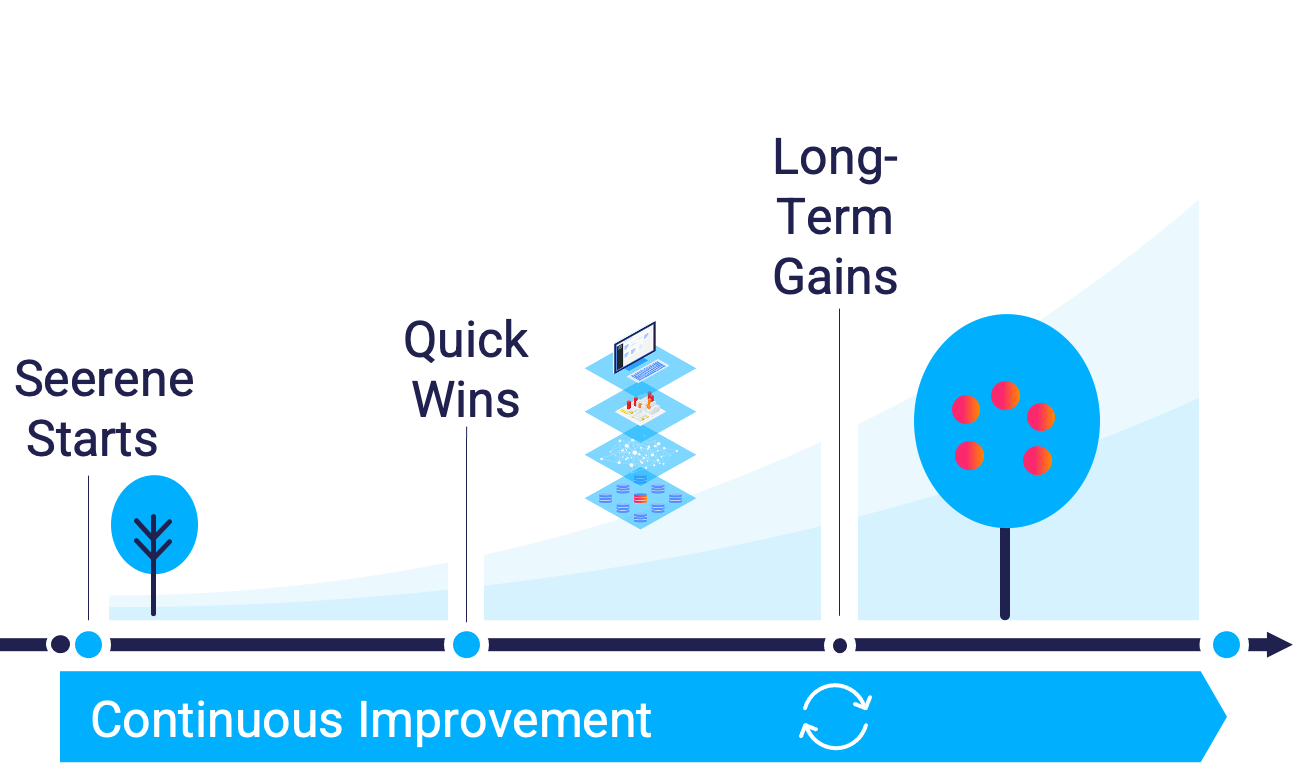

With Seerene's KPIs interpreting analytics on code and code changes, you can know which requirement or change request bears the biggest risk of defects. You can mitigate the risk with less testing effort.
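As an illustration of what risk-driven test selection could look like, the sketch below picks manual tests under a fixed effort budget by ranking them on risk per hour. The `ManualTest` type, the risk scores, and the greedy rule are assumptions made for this example; they are not Seerene's KPIs, and a greedy pick is a simple heuristic rather than a guaranteed optimum.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ManualTest:
    name: str
    risk: float   # hypothetical defect-risk score of the covered requirement
    hours: float  # estimated manual effort, must be greater than zero


def select_tests(cases, budget_hours):
    """Greedily pick tests with the best risk-per-hour ratio that fit the budget."""
    chosen = []
    remaining = budget_hours
    for case in sorted(cases, key=lambda c: c.risk / c.hours, reverse=True):
        if case.hours <= remaining:
            chosen.append(case)
            remaining -= case.hours
    return chosen


# Illustrative data only.
cases = [
    ManualTest("checkout", risk=0.9, hours=3),
    ManualTest("invoice", risk=0.6, hours=1),
    ManualTest("search", risk=0.1, hours=2),
    ManualTest("profile", risk=0.3, hours=2),
]

selected = select_tests(cases, budget_hours=5)
selected_names = [case.name for case in selected]
```

With a budget of five hours, the low-risk tests are the ones left out, instead of whichever tests happen to come last in the plan.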

With Seerene's analytics on code coverage by manual and automated tests, you can see how well functionality X is already covered by automated tests and where the blind spots are that still need to be covered by manual tests.
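The idea of a blind spot can be sketched as a set difference: changed lines that no automated test executes. The input shapes below (file path mapped to line numbers) are an assumption for illustration, not the format of any particular coverage tool or of Seerene's analytics.

```python
def blind_spots(changed_lines, covered_lines):
    """Return {path: sorted changed line numbers not hit by automated tests}.

    changed_lines: hypothetical mapping path -> line numbers changed in the release
    covered_lines: hypothetical mapping path -> line numbers executed by automated tests
    """
    spots = {}
    for path, lines in changed_lines.items():
        uncovered = set(lines) - set(covered_lines.get(path, ()))
        if uncovered:
            spots[path] = sorted(uncovered)
    return spots


# Illustrative data only.
changed = {
    "shared/tax.py": {10, 11, 12},
    "billing/invoice.py": {40, 41},
    "checkout/cart.py": {7},
}
covered = {
    "shared/tax.py": {10, 11},
    "billing/invoice.py": {40, 41, 42},
}

spots = blind_spots(changed, covered)
```

Files that appear in `spots` are candidates for manual testing; files whose changes are fully covered by automated tests can be given lower manual priority.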

Seerene's analytics can reveal the architectural code footprint of a functionality and tell how strongly it is affected in the current release candidate. This way, you can even spot possible side effects caused by code cleanups, which are usually neglected.

August-Bebel-Str. 26-53

14482 Potsdam, Germany

hello@seerene.com

+49 (0) 331 706 234 0
