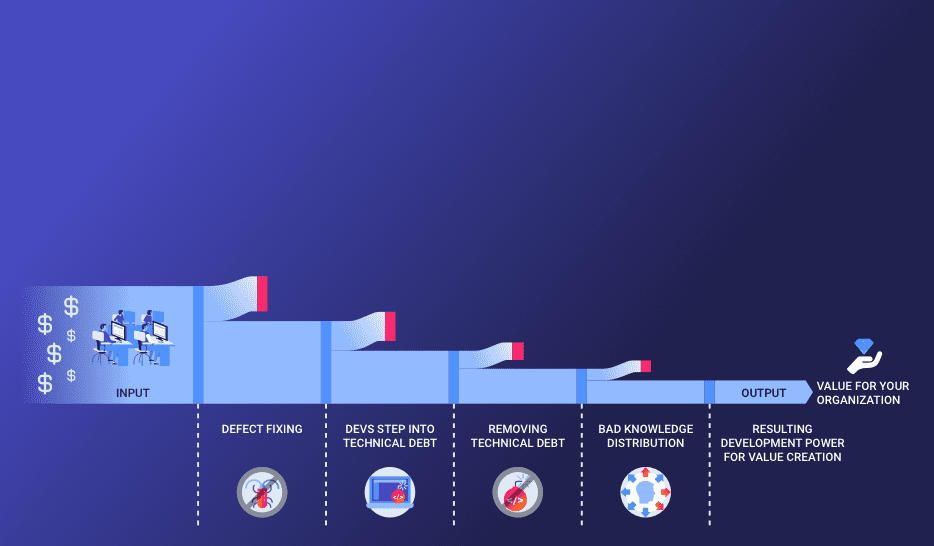

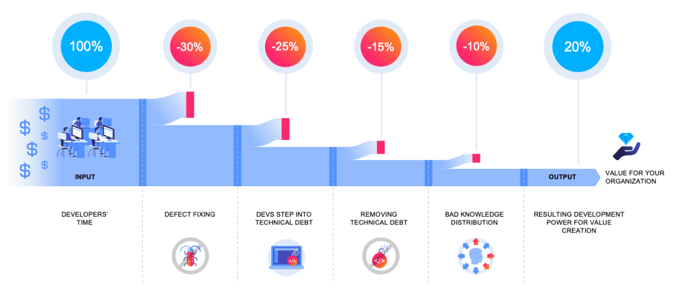

As many executives responsible for a software development organization painfully know, only a small fraction of the budget invested in software development ends up creating what the users want: value-adding features. The rest of the time and money is eaten up by inefficiencies. The typical numbers found in real-world corporations are frighteningly high: imagine you have a budget of 10 million euros to spend on software development and only 2 million euros, or 20%, are converted into value creation (see Image 1). No other industry would run its business so inefficiently. But why is software development so difficult? And where have all the time and money gone? The reasons are manifold, and we will elaborate on them in multiple articles. In this article we take a deep dive into the number one time-killer for developers: defect fixing.

Image 1: What is using up your software development budget?

Obviously, defects cause damage beyond the inefficiency itself. Dissatisfied users lead to lost revenue, cancelled contracts and reputational damage. As newspaper headlines regularly show (e.g. the Lidl software disaster), software crises and software failures are common problems.

In a series of articles, we examine the software development process with all its flavors (waterfall vs. agile; projects vs. products; …) and show you how analytics methods can help you to better monitor, steer and optimize your software development organization.

Find out more about how you can successfully implement software analytics in your company.

In a nutshell, the purpose of a software development organization is to deliver user value (see Image 2). This is not a trivial task for those responsible for the organization, because it means juggling multiple, often contradictory challenges and spending the given budget wisely. One major challenge is to make the organization run efficiently. The budget that you have received from your customer (the business side of your company or whoever sponsors the next software release) should be converted into maximum added user value in the next release of your software.

Image 2: The purpose of a software development organization is to deliver user value.

Running an efficient software development organization means that the money you spend is not lost along the way and can be used 100% for user value creation (software innovation). Developers are typically the largest cost factor in a software development organization. Hence, we will focus on understanding and optimizing the developers’ activities so that they can work as productively as possible without experiencing friction.

The bad news is that developers can typically use only a fraction of their time for creating user value. The reasons are multi-faceted and often originate from situations in which developers have to cope with difficult code (see LaToza et al. [1]). The good news is that if you can pinpoint the root causes, you have a powerful lever to convert your inefficiencies into “extra power”. Usually, the friction in the process is so high that gaining, for example, more than 30% additional productivity amounts to picking the low-hanging fruit.

Image 1 shows the flow of input and how it is converted into output. The input is the developers’ time that is “bought” by your budget. The output is the added user value contained in a new software release. Now, let’s discuss what usually happens on the way from input to output and where inefficiencies may arise.

The number one productivity killer in software development is defects. If a defect is found during testing or, worse, by the users, the developers’ focus in the upcoming development cycle is diverted from adding value, and a significant amount of coding effort has to be spent on fixing the defect. Typical numbers that we have found throughout our customer base range from 20% to 40% of the coding effort being consumed by defect fixing. As bad as this sounds, it is dormant potential for your organization from which you can gain 10–30% extra power, provided you have the means to tackle the problem.

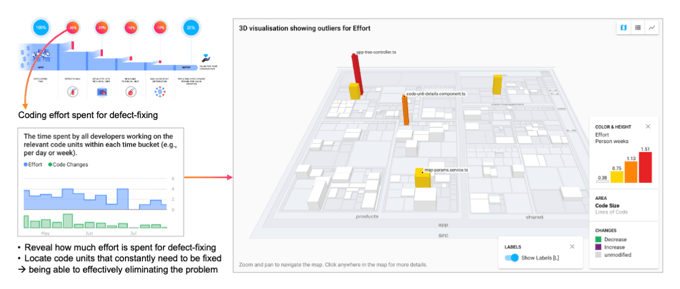

Monitoring and Steering: Quantifying the as-is-problem

Academic research on applying analytics to software engineering problems has produced stable analytics methods, applicable at industry scale, that reconstruct the amount of coding effort (developer time) spent on the various development tasks and how that effort is distributed across the code architecture. The approach is based on the data found in the code repository and does not burden developers with manually tracking their time.

With this approach, we can derive the KPI “Effort for Defect Fixing” (see Image 3). It serves as a steering KPI that needs no interpretation: an efficient organization aims to drive it towards zero.

Image 3: Coding effort spent on defect fixing
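As a rough illustration of the idea behind such a KPI, the following minimal sketch computes the share of coding effort spent on defect fixing from a list of commit records. The `Commit` type, the keyword heuristic for recognizing defect-fixing commits, and the use of changed lines as a proxy for effort are all simplifying assumptions made for this example; they are not the analytics method described in this article, which reconstructs developer time in a more sophisticated way.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword heuristic for recognizing defect-fixing commits.
DEFECT_PATTERN = re.compile(r"\b(fix|fixes|fixed|bug|defect|hotfix)\b", re.IGNORECASE)


@dataclass(frozen=True)
class Commit:
    message: str
    path: str
    lines_changed: int  # crude effort proxy (assumption, not real developer time)


def is_defect_fix(commit):
    """Return True if the commit message looks like a defect fix."""
    return DEFECT_PATTERN.search(commit.message) is not None


def defect_fixing_share(commits):
    """Fraction of total effort (changed lines) that went into defect fixing."""
    total = sum(c.lines_changed for c in commits)
    if total == 0:
        return 0.0
    defect = sum(c.lines_changed for c in commits if is_defect_fix(c))
    return defect / total


# Illustrative data only.
commits = [
    Commit("Add export feature", "app/export.py", 120),
    Commit("Fix crash in export", "app/export.py", 30),
    Commit("Refactor billing module", "billing/invoice.py", 50),
]
share = defect_fixing_share(commits)
print(f"Effort for defect fixing: {share:.0%}")
```

In practice, the commit records would come from the code repository and, where available, from the linked issue tracker, which usually classifies defects more reliably than commit messages do.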

Becoming actionable: Identifying and tackling the root causes

But analytics methods do more than monitor this target KPI, which reflects how bad the situation is right now. Because the KPI is based on the raw ground-truth data found in the organization’s technical repositories, the same data also supports a precise root cause analysis that reveals the origin of the situation. The effort for defect-fixing activities can be located in the code architecture, which reveals which part of the architecture, or even which code file, is a constant source of defects. With this knowledge, you can work like a “surgeon” and make small, precise and focused code improvements in the next development cycle, with the reward of having removed the smoldering problem hotspot.
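Locating defect-fixing effort in the code can be sketched as a simple aggregation per file. The example below assumes that defect-fixing changes have already been identified and are available as `(path, lines_changed)` pairs; the function names and the sample data are hypothetical and only illustrate the principle.

```python
from collections import defaultdict


def defect_effort_by_path(fix_records):
    """Sum defect-fixing effort per file from (path, lines_changed) pairs."""
    totals = defaultdict(int)
    for path, lines_changed in fix_records:
        totals[path] += lines_changed
    return dict(totals)


def top_hotspots(fix_records, n=3):
    """Return the n files with the highest accumulated defect-fixing effort."""
    totals = defect_effort_by_path(fix_records)
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)[:n]


# Illustrative data only: each record is one defect-fixing change to a file.
fix_records = [
    ("billing/invoice.py", 40),
    ("app/export.py", 30),
    ("billing/invoice.py", 25),
    ("app/login.py", 5),
]
hotspots = top_hotspots(fix_records, n=2)
for path, effort in hotspots:
    print(path, effort)
```

Grouping by directory or module instead of by file would give the same view at the level of the architecture.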

You can even do further forensics and look at what happened to the problematic code locations in the past. Which team was involved here? Do they need support from more experienced developers? Is the delivery pressure so high that they don’t have time to do it right?

Proactive risk mitigation: Securing code changes with automated tests

The earlier a defect is found, the less expensive it is to fix. The worst case is that users experience the defect. In a good case, the defect is found during testing before the release ships. In the best case, the developers themselves run automated unit tests and catch unintended side effects of their code changes.

Analytics methods can also take code coverage into account, that is, the information on how thoroughly each code file is covered (executed) by automated tests. When this data is fused with further aspects of the code, such as its complexity or the amount of effort that has been invested in it, analytics methods provide a highly effective way to quantify the risk contained in a code unit, e.g., “effort-intensive construction site within highly complex code”. The result also reflects the risk-mitigating effect of the tests: “Do the tests cover this code, or do they only secure the nice code to its left and right?”
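One way to picture this fusion of data is a simple risk score per code unit. The sketch below is an assumption made purely for illustration: it multiplies normalized complexity and effort to express exposure and then discounts that exposure by test coverage. The `CodeUnit` type, the normalization to values between 0 and 1, and the formula itself are hypothetical and not the scoring used by any particular analytics product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CodeUnit:
    path: str
    coverage: float    # fraction of the code executed by automated tests, 0.0 to 1.0
    complexity: float  # complexity normalized to 0.0 to 1.0 (assumption)
    effort: float      # recent coding effort normalized to 0.0 to 1.0 (assumption)


def risk_score(unit):
    """Exposure (complexity times effort), discounted by test coverage."""
    exposure = unit.complexity * unit.effort
    return exposure * (1.0 - unit.coverage)


# Illustrative data only.
units = [
    CodeUnit("billing/invoice.py", coverage=0.2, complexity=0.9, effort=0.8),
    CodeUnit("app/export.py", coverage=0.9, complexity=0.9, effort=0.8),
    CodeUnit("app/utils.py", coverage=0.1, complexity=0.1, effort=0.1),
]
ranked = sorted(units, key=risk_score, reverse=True)
for unit in ranked:
    print(f"{unit.path}: {risk_score(unit):.3f}")
```

With this scoring, a complex and heavily changed file with little coverage ranks first, while an equally complex file that is well covered ranks far lower, and simple, rarely touched code ranks last even when it is hardly tested.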

We have taken a deeper look at why software development is such a difficult discipline and why those responsible for a software development or delivery organization often struggle. One dimension of the manifold challenges they have to master is efficiency: making sure that 100% of the organization’s “input money” is transformed into “output” in the form of value-adding software features. On the way from input to output there are many traps and pitfalls that make developers burn their precious time. In this article, we have taken a deep dive into the number one time-killer, defect fixing, and have shown how analytics helps to get a grip on this problem, both from a management and steering perspective and from an operational perspective. Analytics methods can quantify the problem by means of KPIs and additionally help identify the root causes in the code and coding activities, so that concrete, actionable insights can be derived to improve the situation. In this way, a development organization can free up a large portion of its wasted development time and obtain a significant amount of “extra power”, seemingly out of nothing. With it, the organization can deliver much more user value for the same amount of input money.

[1] LaToza, Thomas D., Gina Venolia, and Robert DeLine. “Maintaining mental models: a study of developer work habits.” Proceedings of the 28th International Conference on Software Engineering. ACM, 2006.

August-Bebel-Str. 26-53

14482 Potsdam, Germany

hello@seerene.com

+49 (0) 331 706 234 0
